December 10th, 2021

Cloud Composer – Terraform Deployment

by Patryk Golabek in Data-Driven, Technology

At Translucent Computing, we continuously examine our DevSecOps processes to deliver better value to our customers. Google Cloud Composer is a managed Apache Airflow service that provides fast deployment and enterprise security. It lets us serve our clients more efficiently: we focus on delivering business value from the data pipelines executed in Apache Airflow and spend less time on infrastructure deployment and management. Here we examine the deployment of Cloud Composer with Terraform.

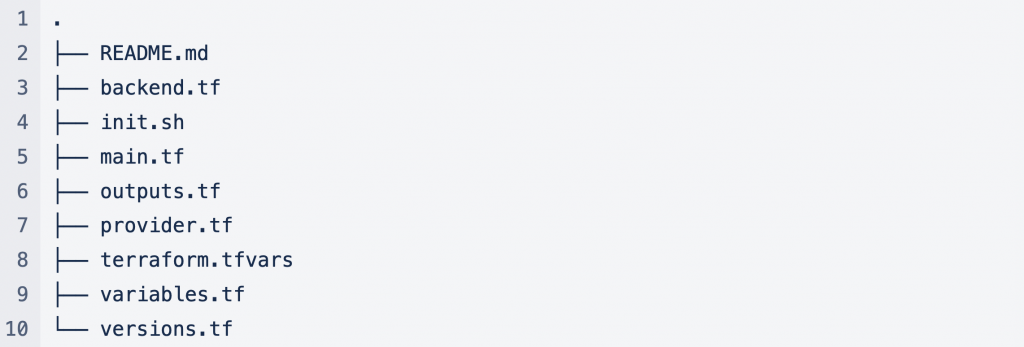

List of files used in this deployment.

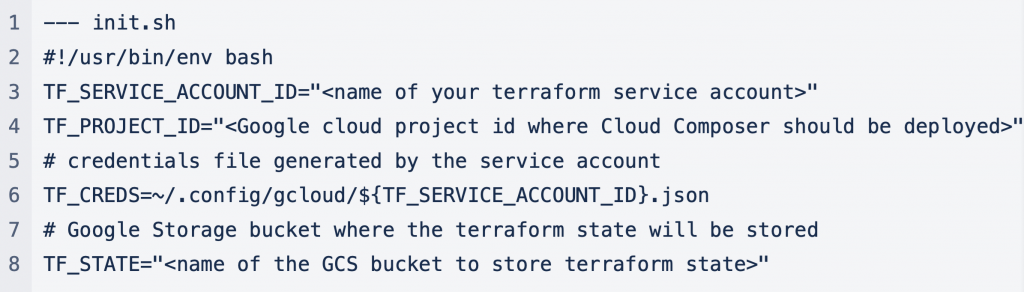

We start by configuring a service account for the Terraform script execution. A Bash script begins by defining the variables required by the gcloud commands.

Using these variables, we create the service account and its credentials file, and assign the required roles to the service account.

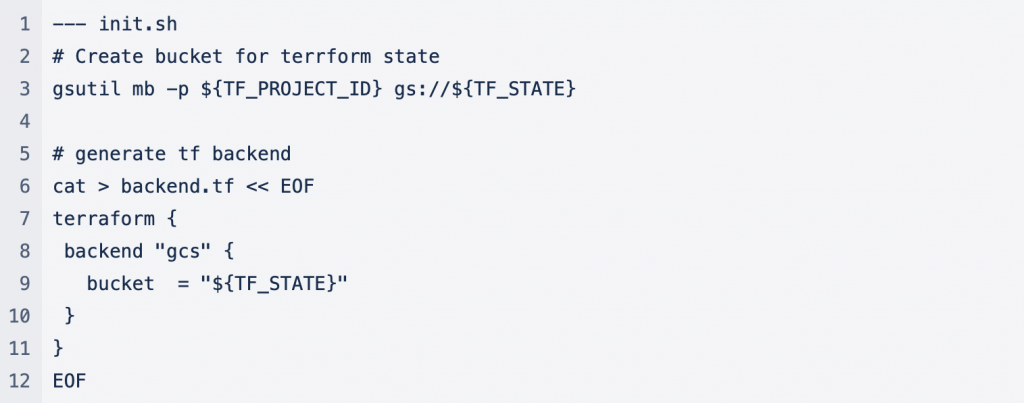

Since we have also chosen Google Cloud Storage as the backend for the Terraform state, we create a bucket in Google Cloud Storage and generate a backend Terraform file.

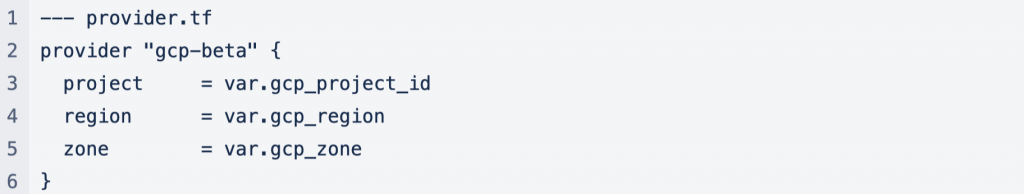
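As a rough illustration of what the generated backend file might contain, here is a minimal sketch of a `gcs` backend block. The bucket name and prefix are hypothetical placeholders, not the values used in this deployment.

```hcl
# backend.tf (sketch): store the Terraform state in a Google Cloud Storage bucket.
terraform {
  backend "gcs" {
    # Hypothetical bucket name; the bucket must already exist.
    bucket = "example-terraform-state-bucket"
    # Hypothetical path prefix under which the state objects are stored.
    prefix = "composer/dev"
  }
}
```

Because backend blocks cannot use variables, generating this file from a script, as described above, is a common way to parameterize the bucket name.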

This initial configuration is enough to use Terraform for the rest of the deployment. Google provides Terraform providers for Google Cloud that cover Cloud Composer. We have transitioned to Cloud Composer 2, which brings the benefits of GKE Autopilot and is designed to reduce operational cost, so we have to use the Google beta Terraform provider.

We next configure the provider.

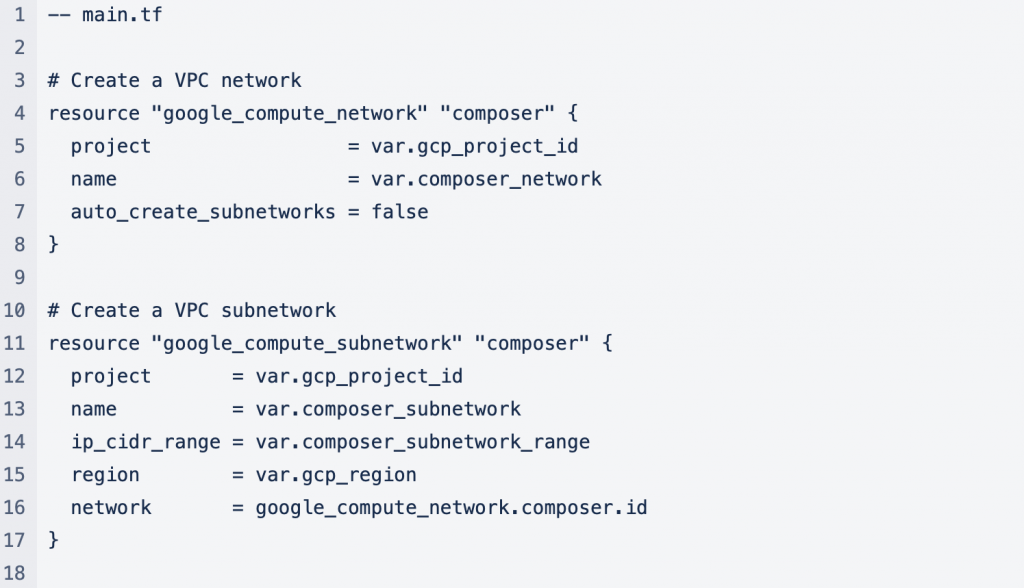
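A provider configuration along these lines could look like the sketch below. The project ID, region, and credentials path are hypothetical placeholders; the actual values come from the variables used in this deployment.

```hcl
# provider.tf (sketch): declare and configure the Google beta provider.
terraform {
  required_providers {
    google-beta = {
      source = "hashicorp/google-beta"
    }
  }
}

provider "google-beta" {
  project     = "example-project-id" # hypothetical project ID
  region      = "us-central1"        # hypothetical region
  credentials = "./credentials.json" # hypothetical path to the service account key file
}
```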

With backend.tf generated and the provider configured, we can focus on the deployment of Cloud Composer. We start by defining the supporting resources that Cloud Composer requires.

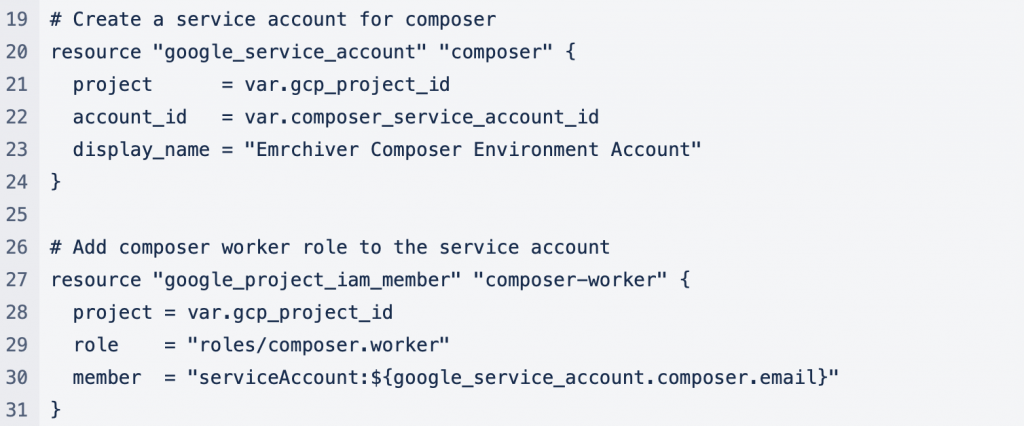
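The exact supporting resources are not listed in the text, so the following is only a sketch under assumptions: a dedicated VPC network, a subnetwork with secondary ranges for pods and services, and a service account with the Composer worker role. All names, CIDR ranges, and the project ID are hypothetical.

```hcl
# Sketch of supporting resources; every name and range below is a placeholder.
resource "google_compute_network" "composer" {
  provider                = google-beta
  project                 = "example-project-id"
  name                    = "composer-dev-network"
  auto_create_subnetworks = false
}

resource "google_compute_subnetwork" "composer" {
  provider                 = google-beta
  project                  = "example-project-id"
  name                     = "composer-dev-subnet"
  region                   = "us-central1"
  network                  = google_compute_network.composer.id
  ip_cidr_range            = "10.10.0.0/20"
  private_ip_google_access = true

  # Secondary ranges used by the GKE cluster for pods and services.
  secondary_ip_range {
    range_name    = "composer-pods"
    ip_cidr_range = "10.20.0.0/16"
  }

  secondary_ip_range {
    range_name    = "composer-services"
    ip_cidr_range = "10.30.0.0/20"
  }
}

# Service account that the Cloud Composer environment runs as.
resource "google_service_account" "composer" {
  provider     = google-beta
  project      = "example-project-id"
  account_id   = "composer-dev-env"
  display_name = "Cloud Composer environment service account"
}

resource "google_project_iam_member" "composer_worker" {
  provider = google-beta
  project  = "example-project-id"
  role     = "roles/composer.worker"
  member   = "serviceAccount:${google_service_account.composer.email}"
}
```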

We can now define the main Terraform resource that creates the Cloud Composer.

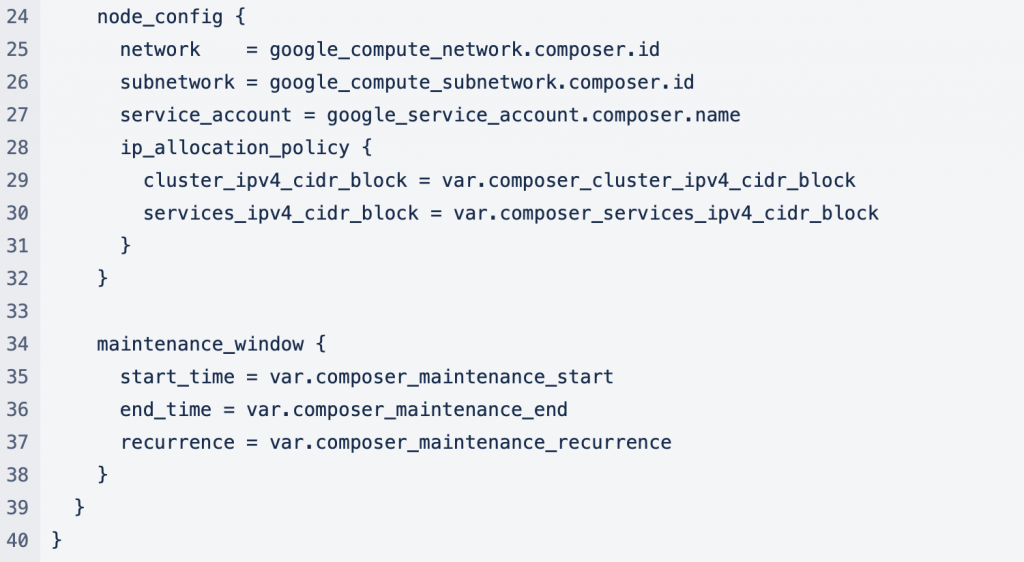

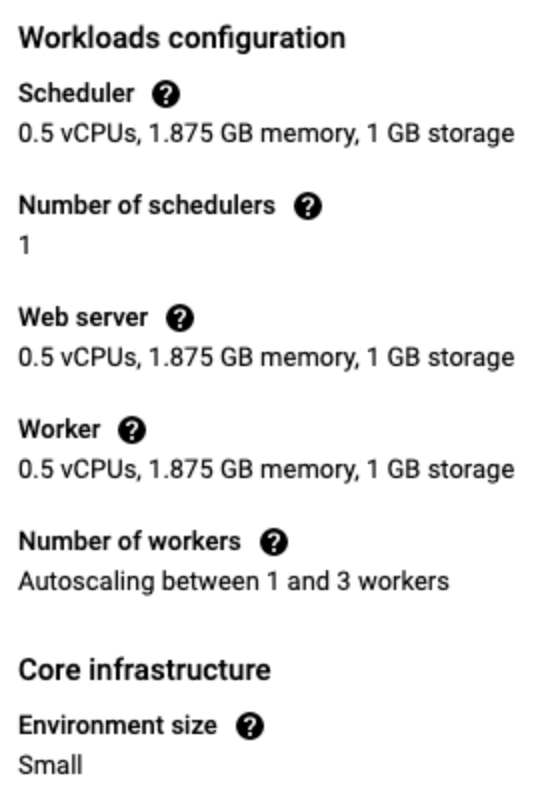

The configuration of Cloud Composer starts with the image version to use. The image version determines the Cloud Composer version that is created, along with the Airflow and Python versions. Google publishes the Cloud Composer image versions here: https://cloud.google.com/composer/docs/concepts/versioning/composer-versions . Next is the environment size, which selects a predefined resource profile for the environment.
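To make these two settings concrete, here is a minimal sketch of an environment that sets only the image version and the environment size. The resource name, project, region, and the chosen image version alias are assumptions for illustration; pick the image version from the list linked above.

```hcl
# Minimal sketch: image version and environment size only.
resource "google_composer_environment" "dev" {
  provider = google-beta
  project  = "example-project-id" # hypothetical project ID
  name     = "composer-dev"       # hypothetical environment name
  region   = "us-central1"        # hypothetical region

  config {
    software_config {
      # Assumed version alias; it selects both the Composer and Airflow versions.
      image_version = "composer-2-airflow-2"
    }

    # One of ENVIRONMENT_SIZE_SMALL, ENVIRONMENT_SIZE_MEDIUM, ENVIRONMENT_SIZE_LARGE.
    environment_size = "ENVIRONMENT_SIZE_SMALL"
  }
}
```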

The predefined configuration can be customized. In that case, the resource settings for the scheduler, web server, and workers have to be added explicitly to the Terraform resource configuration.

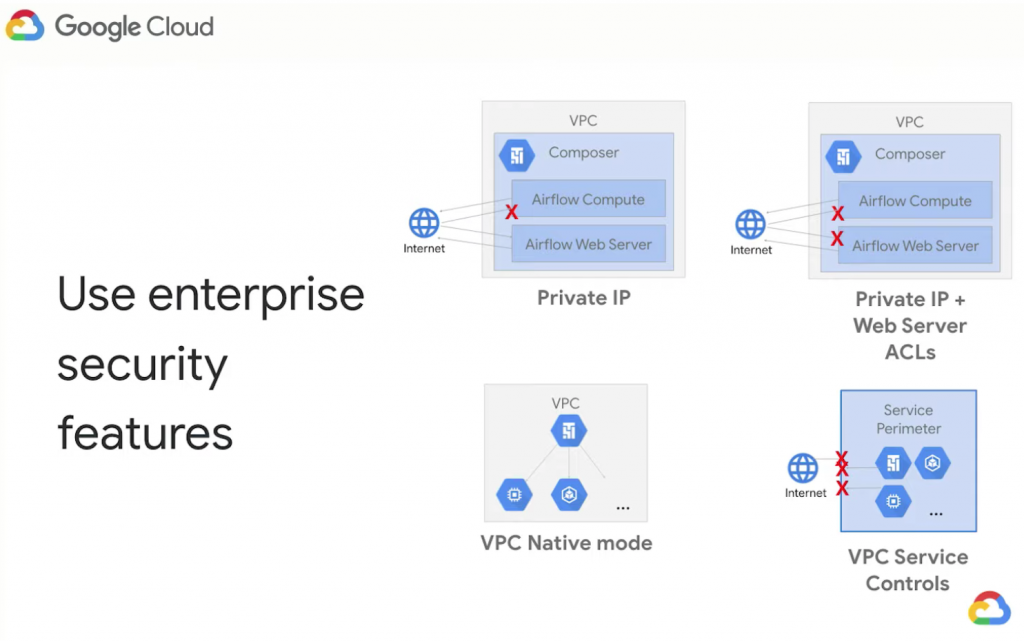
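A customized configuration might look like the sketch below, which uses the `workloads_config` block of the `google_composer_environment` resource. The CPU, memory, storage, and count values are illustrative placeholders, not the values used in this deployment.

```hcl
# Sketch: explicit resources for the scheduler, web server, and workers.
resource "google_composer_environment" "custom" {
  provider = google-beta
  project  = "example-project-id"  # hypothetical project ID
  name     = "composer-dev-custom" # hypothetical environment name
  region   = "us-central1"         # hypothetical region

  config {
    software_config {
      image_version = "composer-2-airflow-2" # assumed version alias
    }

    workloads_config {
      scheduler {
        cpu        = 0.5
        memory_gb  = 1.875
        storage_gb = 1
        count      = 1
      }

      web_server {
        cpu        = 0.5
        memory_gb  = 1.875
        storage_gb = 1
      }

      worker {
        cpu        = 0.5
        memory_gb  = 1.875
        storage_gb = 1
        min_count  = 1 # lower bound for worker autoscaling
        max_count  = 3 # upper bound for worker autoscaling
      }
    }

    environment_size = "ENVIRONMENT_SIZE_SMALL"
  }
}
```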

Google Cloud Composer provides enterprise security with several security configuration options.

For this development environment, we chose to deploy Cloud Composer with a Private IP strategy in VPC-native mode. The GKE cluster deployed with Cloud Composer is a private cluster, Cloud Composer itself is deployed to a private network, and the Cloud SQL instance deployed with Cloud Composer is also on a private network. The only public internet access allowed is to the Apache Airflow web server, with SSO managed by Google IAM. To accomplish this configuration, we define private_environment_config and node_config in the Terraform Cloud Composer resource config. Lastly, we define a maintenance window to allow for system upgrades.
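The following sketch shows how `node_config`, `private_environment_config`, and `maintenance_window` can fit together in one environment. It is an illustration under assumptions, not the configuration used here: the network, subnetwork, service account, range names, and schedule are all hypothetical, and whether to enable the private GKE endpoint is a choice the text does not specify.

```hcl
# Sketch: Private IP environment with a maintenance window.
resource "google_composer_environment" "private" {
  provider = google-beta
  project  = "example-project-id"   # hypothetical project ID
  name     = "composer-dev-private" # hypothetical environment name
  region   = "us-central1"          # hypothetical region

  config {
    software_config {
      image_version = "composer-2-airflow-2" # assumed version alias
    }

    node_config {
      # Hypothetical network, subnetwork, and service account.
      network         = "projects/example-project-id/global/networks/composer-dev-network"
      subnetwork      = "projects/example-project-id/regions/us-central1/subnetworks/composer-dev-subnet"
      service_account = "composer-dev-env@example-project-id.iam.gserviceaccount.com"

      ip_allocation_policy {
        # Names of secondary ranges that must exist on the subnetwork.
        cluster_secondary_range_name  = "composer-pods"
        services_secondary_range_name = "composer-services"
      }
    }

    private_environment_config {
      # Assumption: also deny access to the public endpoint of the GKE control plane.
      enable_private_endpoint = true
    }

    maintenance_window {
      # Three six-hour windows per week; the dates only anchor the time of day.
      start_time = "2021-01-01T01:00:00Z"
      end_time   = "2021-01-01T07:00:00Z"
      recurrence = "FREQ=WEEKLY;BYDAY=SU,WE,SA"
    }
  }
}
```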

Once you execute the Terraform script with

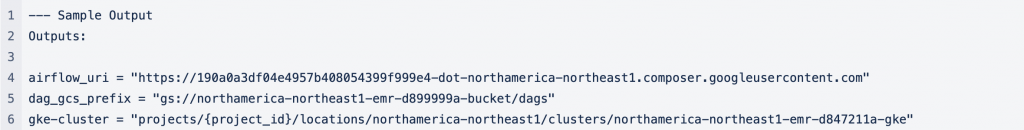

after about 30 min the networking, GKE, Cloud SQL, AirFlow Web Server, and Cloud Composer will be deployed and ready to use. To support further processing we define output Terraform variables that provide feedback from the deployment.

The outputs provide information about the GKE cluster, the Airflow web server URL, and the Google Cloud Storage bucket where the Airflow DAGs should be stored.

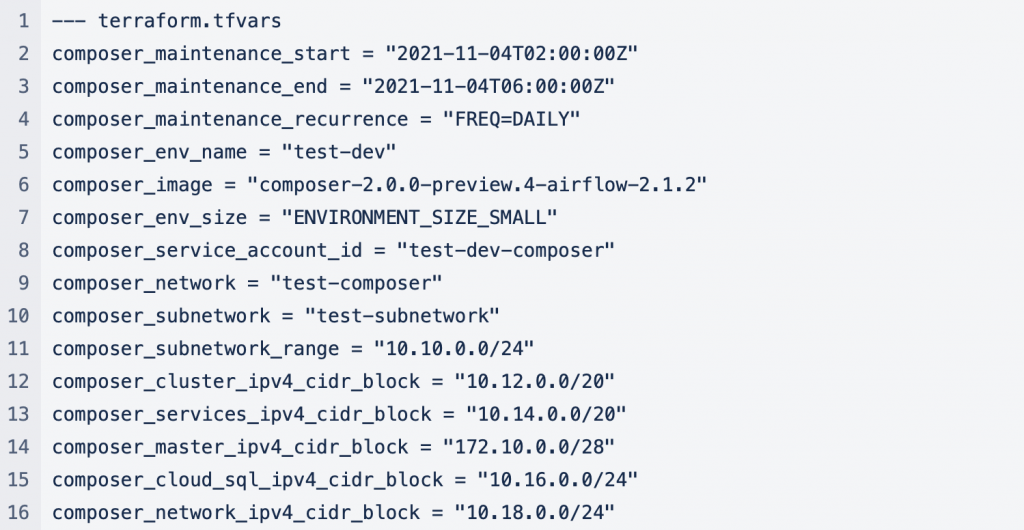
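Outputs of this kind can be sketched as follows. The output names are hypothetical, and a minimal environment resource is included only so the references resolve; in a real module the outputs would point at the environment defined earlier.

```hcl
# Minimal environment so that the outputs below have something to reference.
resource "google_composer_environment" "example" {
  provider = google-beta
  project  = "example-project-id"   # hypothetical project ID
  name     = "composer-dev-outputs" # hypothetical environment name
  region   = "us-central1"          # hypothetical region

  config {
    software_config {
      image_version = "composer-2-airflow-2" # assumed version alias
    }
  }
}

# GKE cluster that runs the environment.
output "gke_cluster" {
  value = google_composer_environment.example.config[0].gke_cluster
}

# URL of the Airflow web server.
output "airflow_uri" {
  value = google_composer_environment.example.config[0].airflow_uri
}

# Cloud Storage path where the Airflow DAGs should be uploaded.
output "dag_gcs_prefix" {
  value = google_composer_environment.example.config[0].dag_gcs_prefix
}
```

After `terraform apply`, running `terraform output dag_gcs_prefix` prints the path to which DAG files can be copied.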

There are many variables used in the Terraform script defined in the variables.tf file. Here we list some of the most relevant values for these variables.
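For readers unfamiliar with variables.tf, the sketch below shows the general shape of such declarations. The variable names, defaults, and validation rule are hypothetical examples, not the variables or values from this deployment.

```hcl
# variables.tf (sketch): hypothetical variable declarations.
variable "project_id" {
  type        = string
  description = "ID of the Google Cloud project that hosts Cloud Composer."
}

variable "region" {
  type        = string
  description = "Region in which the environment is created."
  default     = "us-central1"
}

variable "environment_size" {
  type        = string
  description = "Cloud Composer 2 environment size."
  default     = "ENVIRONMENT_SIZE_SMALL"

  # Reject values that are not one of the three supported sizes.
  validation {
    condition = contains(
      ["ENVIRONMENT_SIZE_SMALL", "ENVIRONMENT_SIZE_MEDIUM", "ENVIRONMENT_SIZE_LARGE"],
      var.environment_size
    )
    error_message = "The environment_size value must be a supported Cloud Composer environment size."
  }
}
```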

The Terraform deployment allows us to deploy data pipeline infrastructure quickly, securely, and with a different configuration for production deployment. It is part of a broader CI/CD pipeline that focuses on delivering value to Translucent clients via efficient DevSecOps.
